March Madness is not exclusive to basketball.

March Madness signals the standardized testing season here in Connecticut.

March Madness signals the tip-off for testing in 23 other states as well.

All CT school districts were offered the opportunity to choose either the soon-to-be-phased-out pen and paper tests, the grades 3-8 Connecticut Mastery Tests (CMT) and the grade 10 Connecticut Academic Performance Test (CAPT), or the new set of computer adaptive Smarter Balanced Tests developed by the federally funded Smarter Balanced Assessment Consortium (SBAC). Regardless of choice, testing would begin in March 2014.

As an incentive, the SBAC offered the 2014 field test as a “practice only”, a means to develop/calibrate future tests to be given in 2015, when the results will be recorded and shared with students and educators. Districts weighed their choices based on technology requirements, and many chose the SBAC field test. But for high school juniors who had completed the pen and paper CAPT in 2013, this is practice; they will receive no feedback. This 2014 SBAC field test will not count.

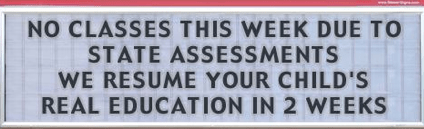

Unfortunately, the same cannot be said for counting the 8.5 hours of testing in English/language arts and mathematics that had to be taken from 2014 academic classes. The elimination of 510 minutes of instructional time is complicated by scheduling students into computer labs with hardware that meets testing specifications. For example, rotating students alphabetically through these labs means that academic classes scheduled during the testing windows may see students A-L one day and students M-Z on another. Additional complications arise for mixed-grade classrooms or schools with block schedules. Teachers must be prepared with partial lessons or repeated lessons during the two-week testing period; some teachers may miss seeing students for extended periods of time. Scheduling madness.

For years, the state standardized test was given to grade 10, sophomore students. In Connecticut, the results were never timely enough to deliver instruction to address areas of weakness during 10th grade, but they did help inform general areas of weakness in curriculum in mathematics, English/language arts, and science. Students who had not passed the CAPT had two more years to pass this graduation requirement; two more years of education were available to address specific student weaknesses.

In contrast, the SBAC is designed to be given to 11th graders, the junior class. Never mind that these junior year students are preparing to sit for the SAT or ACT, national standardized tests. Never mind that many of these same juniors have opted to take Advanced Placement courses with testing dates scheduled for the first two full weeks of May. On Twitter feeds, AP teachers from New England to the mid-Atlantic are already complaining about the number of delays and school days lost to winter weather (for us, five) and the scheduled week of spring break (for us, the third week of April) that comes right before testing for these AP college credit exams. There is content to be covered, and teachers are voicing concerns about losing classroom seat time. Madness.

Preparing students to be college and career ready through the elimination of instructional time teachers use to prepare students for college-required standardized testing (SAT, ACT) is puzzling, but the taking of instructional time so students can take state-mandated standardized tests that claim to measure preparedness for college and career is an exercise in circular logic. Junior students are experiencing an educational Catch-22: they are practicing for a test they will never take, a field test that does not count. More madness.

In addition, juniors who failed the CT CAPT in grade 10 will still practice with the field test in 2014. Their CAPT graduation requirement, however, cannot be met with this test, and they must still take an alternative assessment to meet district standards. Furthermore, from 2015 on, students who do not pass SBAC will not have two years to meet a state graduation requirement; their window to meet the graduation standard is limited to their senior year. Even more madness.

Now, on the eve of the inaugural testing season, a tweet from SBAC itself (3/14):

This tweet was followed by word from CT Department of Education Commissioner Stefan Pryor’s office, sent out to superintendents by Dianna Roberge-Wentzell (DRW), that the state test will be delayed a week:

Schools that anticipated administering the Field Test during the first week of testing window 1 (March 18 – March 24) will need to adjust their schedule. It is possible that these schools might be able to reschedule the testing days to fall within the remainder of the first testing window or extend testing into the first week of window 2 (April 7 – April 11).

Education Week blogger Stephen Sawchuk provides more details on the explanation for the delay in his post Smarter Balanced Group Delays:

The delay isn’t about the test’s content, officials said: It’s about ensuring that all the important elements, including the software and accessibility features (such as read-aloud assistance for certain students with disabilities) are working together seamlessly.

“There’s a huge amount of quality checking you want to do to make sure that things go well, and that when students sit down, the test is ready for them, and if they have any special supports, that they’re loaded in and ready to go,” Jacqueline King, a spokeswoman for Smarter Balanced, said in a March 14 interview. “We’re well on our way through that, but we decided yesterday that we needed a few more days to make sure we had absolutely done all that we could before students start to take the field tests.”

A few more days is what teachers who carefully planned alternative lesson plans during the first week of the field test probably want in order to revise their lessons. The notice that districts “might be able to reschedule” in the CT memo is not helpful for a smooth delivery of curriculum, especially since school schedules are not developed with empty time slots available to accommodate “willy-nilly testing” windows. There are field trips, author visits, and assemblies scattered throughout the year, sometimes organized years in advance. Cancellation of activities can be at best disappointing, at worst costly. Increasing madness.

Added to all this madness is a growing “opt-out” movement for the field test. District administrators are trying to address this concern from parents on one front and, on another, the growing concerns of educators who are wrestling with an increasingly fluid schedule. According to Sarah Darer Littman on her blog Connecticut News Junkie, the Bethel school district offered the following in a letter parents of Bethel High School students received in February:

“Unless we are able to field test students, we will not know what assessment items and performance tasks work well and what must be changed in the future development of the test . . . Therefore, every child’s participation is critical.

For actively participating in both portions of the field test (mathematics/English language arts), students will receive 10 hours of community service and they will be eligible for exemption from their final exam in English and/or Math if they receive a B average (83) or higher in that class during Semester Two.”

Field testing as community service? Madness. Littman goes on to point out that research shows that a student’s GPA is a better indicator of college success than an SAT score and suggests an exemption raises questions about a district’s value on standardized testing over student GPA, their own internal measurement. That statement may cause even more madness, of an entirely different sort.

Connecticut is not the only state to be impacted by the delay. SBAC states include: California, Delaware, Hawaii, Idaho, Iowa, Maine, Michigan, Missouri, Montana, Nevada, New Hampshire, North Carolina, North Dakota, Oregon, Pennsylvania, South Carolina, South Dakota, U.S. Virgin Islands, Vermont, Washington, West Virginia, Wisconsin, Wyoming.

In the past, Connecticut has been called “The Land of Steady Habits,” “The Constitution State,” and “The Nutmeg State.” With SBAC, we could claim that we are now “A State of Madness,” except for the 23 other states that might want the same moniker. Maybe we should compete for the title? A kind of Education Bracketology just in time for March Madness.

As the 10th grade English teacher, Linda’s role had been to prepare students for the rigors of the State of Connecticut Academic Performance Test, otherwise known as the CAPT. She had been preparing students with exam-released materials, and her collection of writing prompts stretched back to 1994. Now that she will be retiring, it is time to clean out the classroom. English teachers are not necessarily hoarders, but there was evidence to suggest that Linda was stocked with enough class sets of short stories to ensure students were always more than adequately prepared. Yet, she was delighted to see these particular stories go.