Notice how I am trying to beat the character limit on headlines?

Here’s the translation:

For your information, Juniors: Connecticut’s Common Core State Standards Smarter Balanced Assessment [Consortium] is Dead on Arrival; Insert Scholastic Achievement Test

Yes, in the State of Connecticut, the test created through the Smarter Balanced Assessment Consortium (SBAC) based on the Common Core State Standards will be canceled for juniors (11th graders) this coming school year (2015-16) and replaced by the Scholastic Achievement Test (SAT).

The first reaction from members of the junior class should be an enormous sigh of relief. There will be one fewer set of tests to take during the school year. The second sigh will come from other students, faculty members, and the administrative team for two major reasons: the computer labs will now be available year round, and schedules will not have to be rearranged for testing sessions.

SAT vs. SBAC Brand

In addition, the credibility of the SAT will most likely receive more buy-in from all stakeholders. Students know what the brand SAT is and what the scores mean; students are already invested in doing well for college applications. Even the shift from the old score of 1600 (pre-2005) to 2400 with the addition of an essay has been met with general understanding that a top score is 800 in each section (math, English, or essay). A student’s SAT scores are part of a college application, and a student may take the SAT repeatedly in order to submit the highest score.

In contrast, the SBAC brand never reported individual student results. The SBAC was created as an assessment for collecting data for teacher and/or curriculum evaluation. When the predictions of the percentage of anticipated failures in math and English were released, there was frustration for teachers and additional disinterest by students. There was no ability to retake, and if predictions meant no one could pass, why should students even try?

Moreover, while the SBAC drove the adoption of digital testing in the state in grades 3-8, most of the pre-test skill development was still given in pen and pencil format. Unless the school district consistently offered a seamless integration of 1:1 technology, there could be a question as to what was being assessed: a student’s technical skills or application of background knowledge. Simply put, skills developed with pen and pencil may not translate to digital testing platforms.

As a side note, those who use computer labs or develop student schedules will be happy to know that the SAT is not a digital test... at least not yet.

US Education Department Approved Request

According to an early report (2006) by the Brookings Institution, the SBAC’s full suite of summative and interim assessments and the Digital Library on formative assessment was first estimated to cost $27.30 per student (grades 3-11). The design of the assessment would be made economical if many states shared the same test.

Since that initial report, several states have left the Smarter Balanced Consortium entirely.

In May, the CT legislature voted to halt SBAC in grade 11 in favor of the SAT. This switch will increase the cost of testing. According to an article (5/28/15) in the CT Mirror, “Debate Swap the SAT for the Smarter Balanced Tests”:

“‘Testing students this year and last cost Connecticut $17 million’, the education department reports. ‘And switching tests will add cost,’ Commissioner of Education Dianna Wentzell said.”

The U.S. Department of Education approved this switch for Connecticut schools on Thursday, 8/6/15; the CT Department of Education had asked that it not be penalized under the No Child Left Behind Act’s rigid requirements. Currently, the switch to the SAT would not change the tests in grades 3-8; SBAC would continue at these grade levels.

Why SBAC at All?

All this begs the question, why was 11th grade selected for the SBAC in the first place? Was the initial cost a factor?

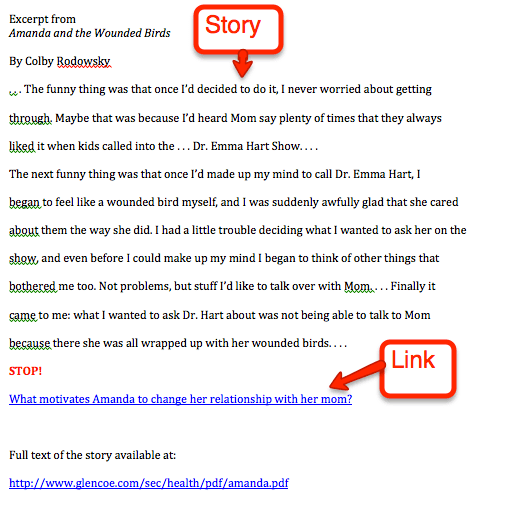

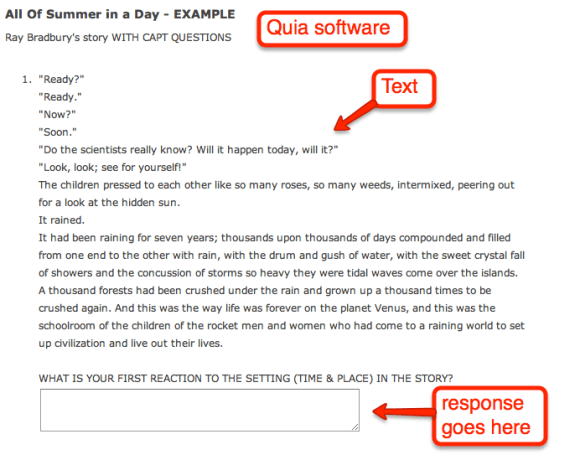

Since the 1990s, the State of Connecticut had given the Connecticut Academic Performance Test (CAPT) in grade 10, and even though the results were reported late, there were still two years to remediate students who needed to develop skills. In contrast, the SBAC was given in the last quarter of grade 11, leaving less time to address any low level student needs. I mentioned these concerns in an earlier post: The Once Great Junior Year, Ruined by Testing.

Moving the SBAC to junior year increased the amount of testing for those electing to take the SAT, with some students also taking the ASVAB (Armed Services Vocational Aptitude Battery) or being selected to take the NAEP (National Assessment of Educational Progress).

There have been three years of “trial testing” for the SBAC in CT, and there has been limited feedback to teachers and students. In contrast, the results from the SAT have always been available as an assessment to track student progress, with results reported to the school guidance departments.

Before No Child Left Behind, before the Common Core State Standards, before SBAC, the SAT was there. What took them (legislature, Department of Education, etc.) so long?

Every Junior Will Take the NEW SAT

In the past, not every student elected to take the SAT test, but many districts did offer the PSAT as an incentive. This coming year, the SAT will be given to every 11th grader in Connecticut.

The big wrinkle in this plan?

The SAT test has been revised (again) and will be new in March 2016.

What should we expect with this test?

My next headline?